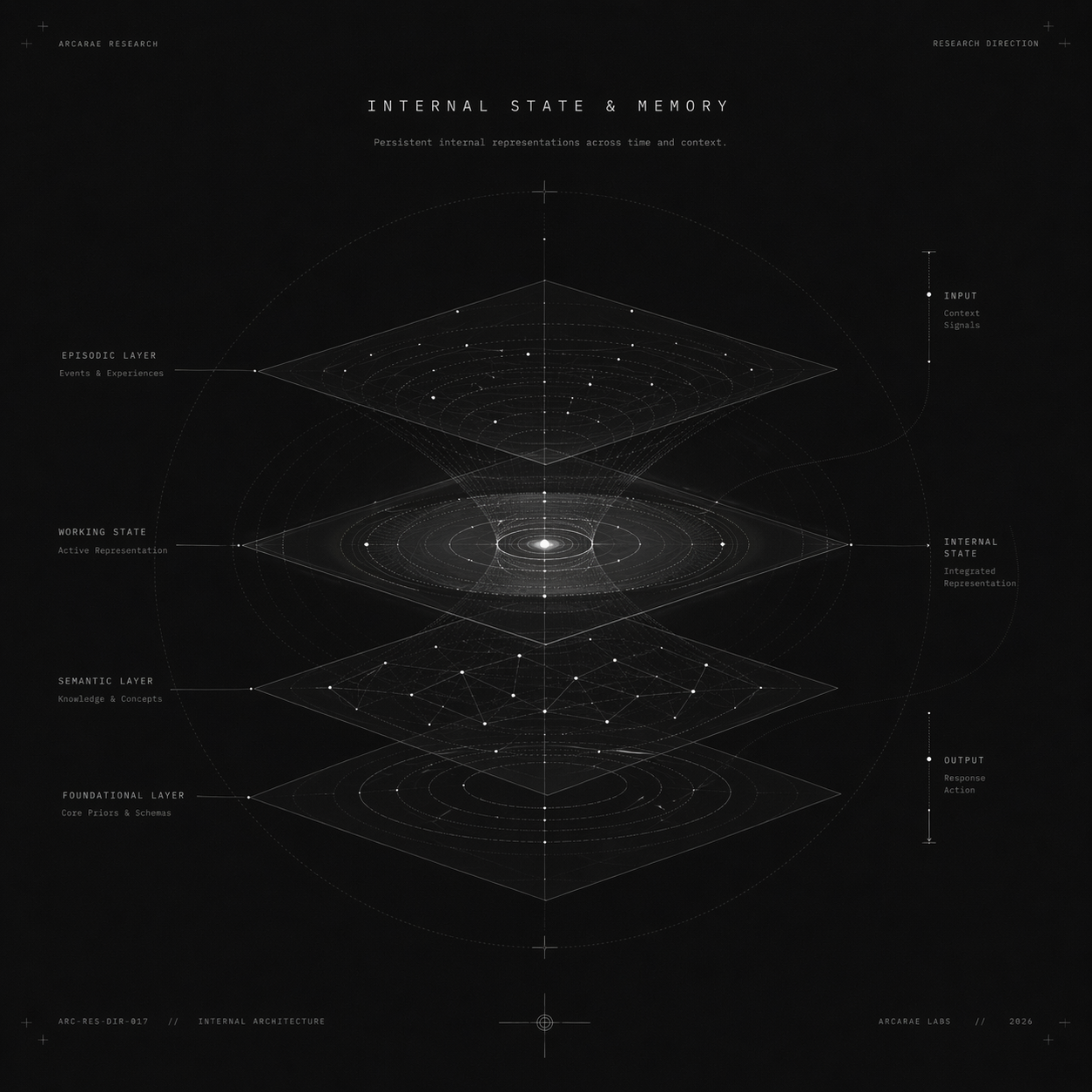

Investigating how language models represent, store, and retrieve information across multiple timescales and contexts.

Studying self-awareness, confidence estimation, and reflective reasoning to enable models that can understand and assess their own thinking.

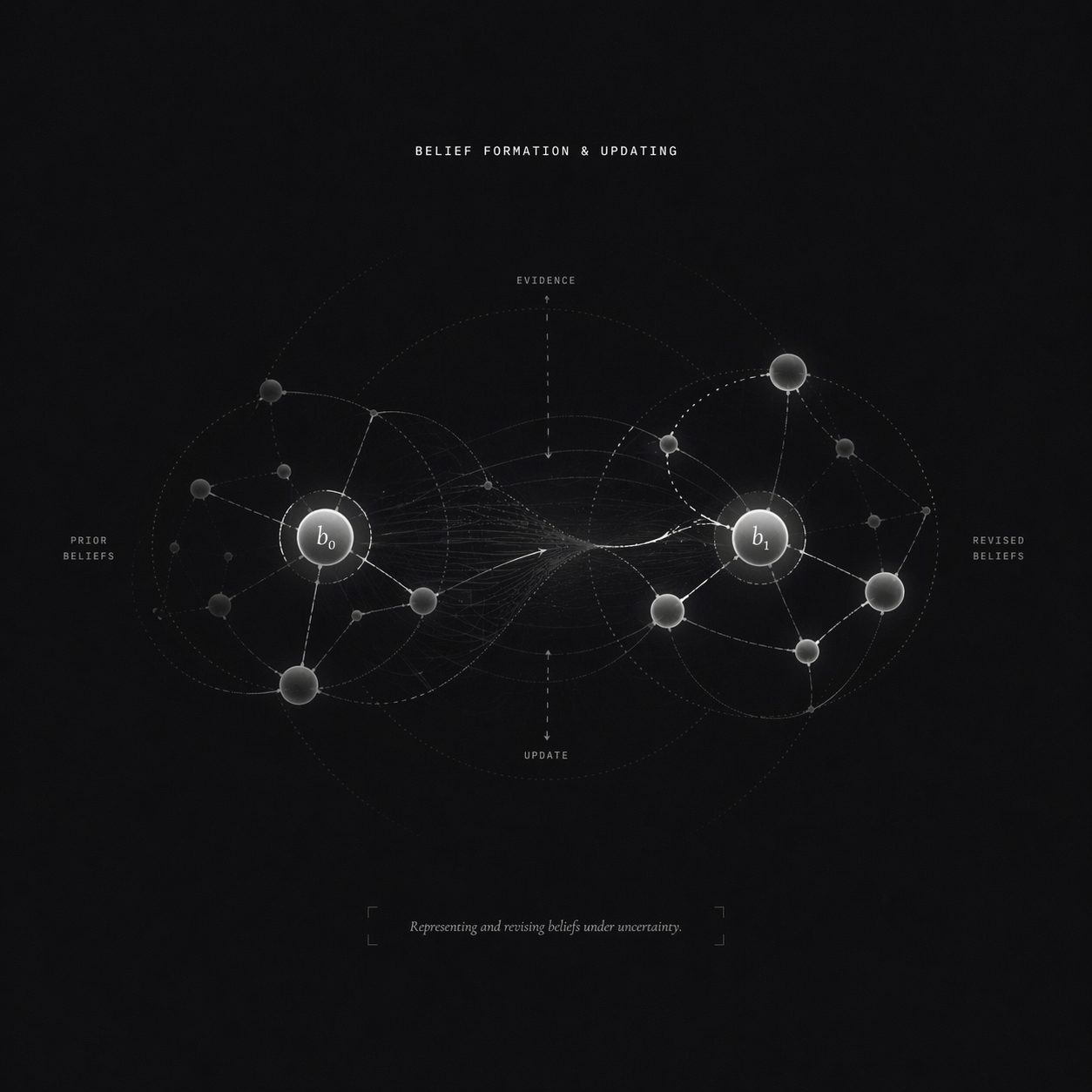

Exploring how models form, revise, and maintain beliefs under uncertainty and in response to new evidence.

Building internal models of the world to support prediction, planning, and counterfactual reasoning.

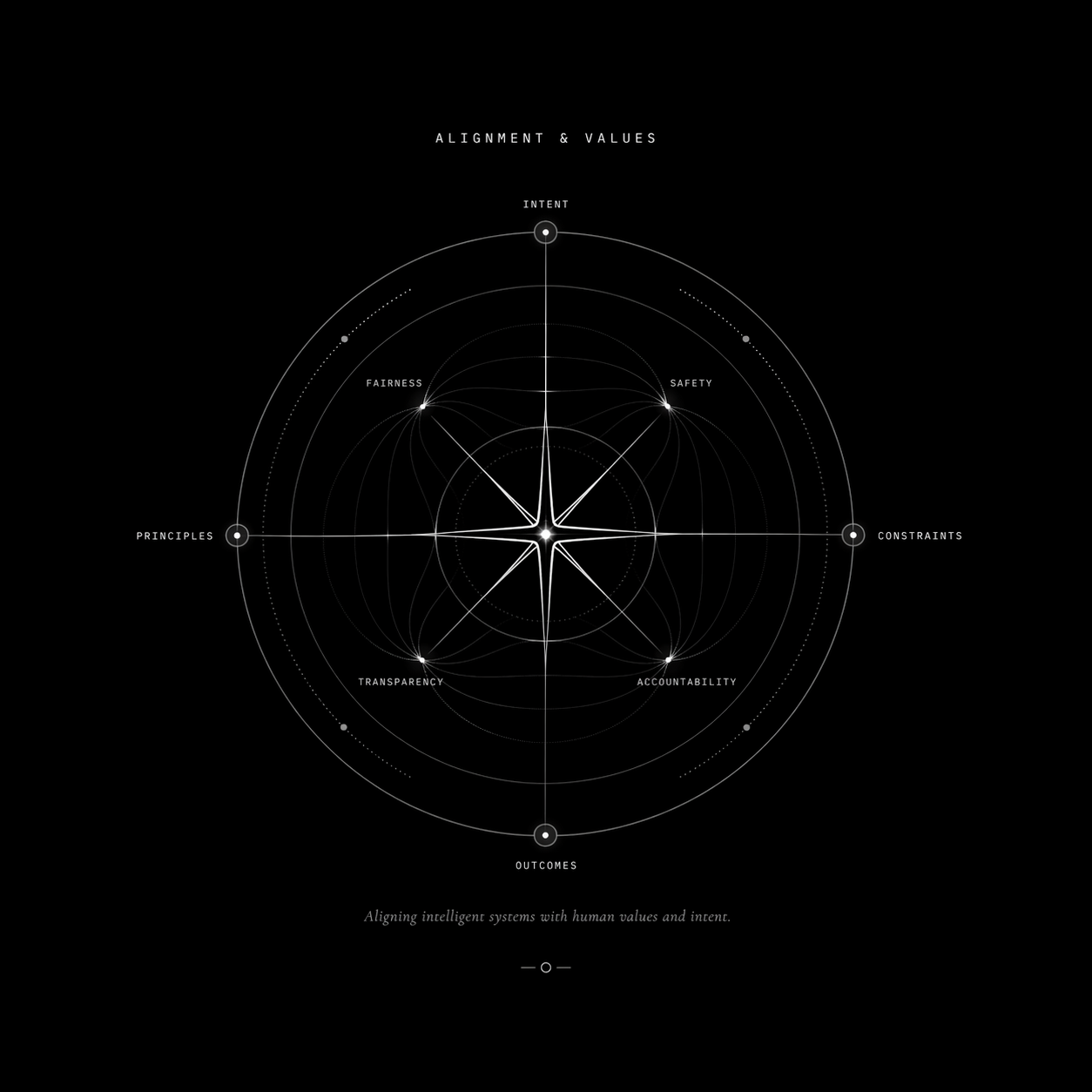

Advancing methods for aligning models with human values, intent, and societal well-being.